Legal AI in 2026: What Really Matters Now and in the Future

In boardroom meetings, the question comes up often enough: "What does Legal AI actually deliver?" And in many legal departments, there is still no honest answer. Partly because several tools are already in use, but also because no one is systematically measuring whether they work. This is exactly where most legal departments find themselves today. Not out of indifference, but because the market is growing faster than the ability to evaluate it. The numbers are impressive: within a single year, the use of generative AI in corporate legal departments more than doubled, from 23% to 52%, across a survey of 657 legal teams in 30 countries (ACC/Everlaw 2025). Legal tech funding exceeded the $6 billion mark for the first time in 2025.

What Has Actually Changed

Not everything sold as a Legal AI trend in 2026 holds up to scrutiny. Some developments, however, are no longer promises – they are operational facts with immediate consequences for the day-to-day work of a legal department.

1. The EU AI Act is no longer a compliance project. It is the daily reality.

Since February 2025, certain AI applications have been prohibited in the EU, and all companies using AI must ensure that their employees have a sufficient understanding of the AI systems they work with. Since August 2025, providers of large AI foundation models have also been regulated; the most serious violations of the Act risk fines of up to €35 million or 7% of global annual turnover. The most stringent rules follow in August 2026, covering AI systems used in sensitive areas such as HR decisions or critical infrastructure. On top of this, the EU has linked the new AI law to existing data protection and cybersecurity obligations. The regulatory frameworks interlock and reinforce each other.

For legal departments, this means a dual responsibility: securing the team's own use of AI while simultaneously guiding the entire organisation through this regulatory framework. Anyone who has not yet built both into their ongoing workload is already behind.

2. Training employees in AI is no longer a voluntary initiative. It is a legal obligation.

The new European AI Act requires all companies using AI to ensure that their employees have an adequate understanding of it. Violations can be treated as a breach of due diligence. The reality: 53% of law firms have no clear rules for AI use, or do not even know whether such rules exist (Clio Legal Trends Report 2025).

For the legal department, this creates an unusual but strategically valuable role. It is often the only part of the organisation that can credibly enforce this obligation towards IT, HR, and operations. That influence should be used actively.

3. Anyone evaluating a standalone AI chatbot today is looking at the wrong product.

In the ABA Legal Industry Report 2025, 43% of respondents cited integration into existing workflows as the most important purchasing criterion, far ahead of features or model quality. All major vendors are now building products that integrate directly into existing software rather than functioning as standalone applications.

The key question is therefore no longer "What can it do in the demo?", but: "Is it embedded in the contract workflow, contract management, and compliance work?" Anyone who has not yet formulated this as a requirement risks buying a product that misses what actually makes the difference.

So much for the good news. Two of the industry's loudest promises deserve considerably more scepticism.

4. "Open markets instead of vendor dependency"

This is the friendly narrative of a market doing precisely the opposite. Clio acquired legal database provider vLex for $1 billion. Harvey, one of the best-known Legal AI providers, raised $760 million in a single year. In 2024, there were over 47 major acquisitions in the legal tech sector, more than double the number three years prior (LegalTech Management Group). What is marketed as an open ecosystem is, in practice, often a platform with growing dependencies.

The consequence is concrete: every contract with a Legal AI provider should from the outset include terms covering how your own data can be exported and taken with you if you switch providers. Anyone who neglects this today will be negotiating from a weak position in three years.

5. "Legal AI finally delivers measurable ROI"

Thomson Reuters reports that over 50% of organisations with an AI strategy see ROI. A Forrester study commissioned by LexisNexis calculates a 284% return over three years. Such studies are, without exception, commissioned by the vendors themselves. The independent reality looks different: only 20% of law firms systematically assess whether their AI investments are creating value (Bloomberg Law 2025). Gartner also projects that over 40% of all agentic AI projects, those where AI autonomously executes tasks, will be discontinued by the end of 2027. For the next budget round, this means: anyone defending AI spending needs numbers that do not come from a vendor. Anyone without their own measurement methodology today will be under pressure in twelve months.
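What an in-house measurement methodology could look like can be sketched in a few lines. The example below is a minimal illustration, not an established standard: the field names, the formula, and all figures are assumptions made for this sketch. It nets the hours spent correcting AI output against the hours saved before calculating a return.

```python
from dataclasses import dataclass


@dataclass
class AiToolMeasurement:
    """Internally measured figures for one AI tool over one period.

    All fields are hypothetical; a real methodology would define its own.
    """
    hours_saved: float             # hours saved, measured on real matters
    hours_correcting: float        # hours spent reviewing and fixing AI output
    internal_hourly_cost: float    # fully loaded cost of one lawyer hour
    external_spend_avoided: float  # outside-counsel fees not incurred
    total_cost: float              # licences, implementation, training


def net_benefit(m: AiToolMeasurement) -> float:
    """Value created, after subtracting the time lost to error correction."""
    net_hours = m.hours_saved - m.hours_correcting
    return net_hours * m.internal_hourly_cost + m.external_spend_avoided


def roi(m: AiToolMeasurement) -> float:
    """Return on investment as a fraction: (benefit - cost) / cost."""
    if m.total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (net_benefit(m) - m.total_cost) / m.total_cost


# Illustrative figures only, not taken from any study.
example = AiToolMeasurement(
    hours_saved=400,
    hours_correcting=100,
    internal_hourly_cost=120,
    external_spend_avoided=30_000,
    total_cost=50_000,
)

print(f"Net benefit: {net_benefit(example):,.0f}")
print(f"ROI: {roi(example):.0%}")
```

The point of the sketch is less the formula than the inputs: every figure comes from the legal department's own records, so the result can be defended in a budget round without relying on vendor studies.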

The Four Blind Spots

There are four developments almost entirely absent from most trend reports, yet operationally the most consequential for legal departments. They range from concrete liability risks to a quiet shift in how the next generation learns, and they are more closely connected than they first appear.

1. The hallucination problem: courts are losing patience

AI systems sometimes invent sources, rulings, or facts that do not exist. This is well known. Less known is the scale: a database maintained by HEC Paris documents 486 such cases before courts worldwide, 324 in the US alone. Courts are responding: in July 2025, a US federal court declared that financial penalties alone "are proving ineffective" (Johnson v. Dunn). A California appeals court further indicated that lawyers may in future be required to actively identify and report AI-generated errors made by opposing counsel.

For legal departments, this means: human review of all AI outputs is not an optional process step. It is a professional obligation that must be embedded as a firm requirement across the team. Clear rules on who reviews AI outputs and how escalation works belong in every internal AI policy.

2. Confidentiality must be guaranteed: the US ruling of February 2026

Judge Rakoff decided in United States v. Heppner (S.D.N.Y.) that documents created using generative AI do not enjoy protection under attorney-client privilege when the tools used offer no contractual confidentiality guarantees. The reasoning is straightforward: generative AI is not a lawyer, and many providers reserve the right to use input data to train their models. Anyone entering confidential information therefore has no reasonable expectation that it will remain so (Arnold & Porter, 2026).

This is not a uniquely American problem. Anyone who enters sensitive contract, transaction, or personnel data into generative AI that is not contractually bound to confidentiality risks a breach of European data protection law. Specialised Legal AI solutions that guarantee neither to store data nor to use it for model training, and that are operated on servers within Europe, are therefore not a premium option for a legal department. They are a requirement.

3. The cost paradox at law firms

Law firms are investing heavily in AI. They are not getting cheaper as a result. In the first half of 2025, their operating costs rose by 8.6%, with hourly rates increasing by 9.2%. The hundred largest US law firms crossed the $1,000-per-hour threshold for the first time. The efficiency gains from AI are not being passed on to clients; they are financing the firms' own technology build-out. The fact that 39% of law firms announced flexible fee arrangements for 2024, but only 9% actually introduced them, speaks for itself (Bloomberg Law 2025). Legal departments waiting for cheaper external advice thanks to AI are waiting in vain.

This is not an argument against AI – but it is a strong argument for investing in internal capabilities today, rather than waiting for external efficiency to trickle down at some point.

4. AI is changing how junior lawyers learn

Goldman Sachs estimates that 44% of legal tasks are automatable. A 2025 National Bureau of Economic Research study shows, however, that AI tools have so far had barely measurable effects on working hours or income. The average time saving of 3% is largely consumed by error correction. The real problem lies elsewhere: junior lawyers who have never worked through routine tasks such as contract review or legal research themselves develop fewer foundational legal skills. Some of the largest US law firms have already restricted AI use for junior associates, in order to assess their capabilities before delegating work.

For legal departments, this raises the question of how the legal judgment of the next generation will be developed, when the tasks on which it is built begin to disappear.

Regional Dynamics and AI Adoption

These four blind spots do not exist in a vacuum. Their relevance also depends on where a legal department operates, and the regional dynamics are more surprising than most reports suggest.

Europe is often assumed to be held back by regulation. The numbers say otherwise. European in-house lawyers show the highest AI adoption rate worldwide at 61%, more than their US counterparts (ACC/Everlaw 2025). The paradoxical driver is the AI Act itself. It creates clarity about what is permitted, and gives legal departments a structured case for investments that would otherwise be harder to push through internally. The downside: depth of use remains shallow. 56% of European legal departments use general tools such as ChatGPT, but only 14% have implemented specialised Legal AI solutions (Wolters Kluwer/Legisway 2025). Adoption is fast, but thin. This is a real opportunity for those who invest in genuine process integration now.

Switzerland is deliberately taking its own path. The Federal Council has adopted a risk-based AI strategy without directly incorporating the European AI Act. The revised Data Protection Act has applied to AI applications since September 2023. For Swiss legal departments with business in the EU, this creates a dual compliance regime: anyone selling into the EU or employing staff there must also understand European AI law. The market is responding: Swiss-Noxtua, a Legal AI system operated on Swiss servers, raised €80.7 million in funding. Data sovereignty – control over where and how legal data is processed – is no longer an ideological position. It is a product category in its own right, with growing demand.

The rapid adoption across Europe shows that generative AI was introduced quickly. When it comes to specialised Legal AI solutions with a focus on legal precision and data security, however, most legal departments are still in a learning phase. And systematic measurement of efficiency or productivity gains is almost entirely absent.

What This Means for Legal Departments Through 2030

The next phase will not be defined by new technology, but by its structural impact on the relationship between the legal department, external law firms, and the wider organisation. Three shifts are emerging, and all three favour legal departments that lay the right foundations today.

1. Dependence on external law firms is declining

78% of in-house teams plan to bring contract drafting in-house. 71% want to handle contract management internally. 64% of all in-house teams expect their reliance on external law firms to decrease (ACC/Everlaw 2025). The CEO of Clio, one of the largest legal software providers, argues that the classic hourly billing model is structurally incompatible with the productivity gains that AI enables. He is right.

The power shift in favour of the legal department, discussed for years, may now be reaching its genuine tipping point. Not through one defining moment, but through a hundred small decisions: keeping this task in-house, not sending that matter out, handling this contract internally.

2. Generic AI is losing strategic relevance

Specialised, company-owned context is becoming the competitive advantage. The National Law Review projects highly specialised AI products by the end of 2026, for example in corporate transactions, patent filings, and employment law. This makes proprietary contract templates, precedents, and clause libraries the strategic capital that determines the quality of AI outputs.

Legal departments that structure their contract data and make it accessible today are building an advantage that others will still be trying to catch up with.

3. The legal department becomes the architect of AI-powered legal functions

Bloomberg Law describes the transformation of the legal department into an "architect of AI-powered legal functions": a unit that no longer merely delivers legal advice, but designs the infrastructure through which the organisation makes legal decisions. Bjarne Tellmann, author of Law in the Era of AI, puts it precisely:

"AI will absorb more routine work. But that does not diminish the importance of the legal department. It increases it."

What no AI can replicate remains exactly what makes the difference: judgment in ambiguous situations, strategic assessment in politically charged moments, and the trust of the organisation's leadership.

Not every promise of the past year will materialise. But the structural shifts – more work brought in-house, deeper specialisation, a stronger standing for the legal department – are real and accelerating. The only question is who shapes them actively.

Four Key Takeaways

1. More control and less dependence on external law firms

78% of in-house teams plan to bring contract drafting in-house, and 71% want to handle contract management internally. AI makes this realistic. Less outsourced, more self-directed.

2. The competitive advantage lies in your own knowledge, not in the tool

Generic AI does not know your organisation. Specialised Legal AI solutions that work with your own contract templates, precedents, and internal policies deliver significantly better results. Anyone who structures their legal knowledge and makes it accessible today is building an advantage that others cannot simply buy.

3. Security comes first

We may only use solutions that contractually guarantee our data will not be shared. A US court ruled in February 2026 that generative AI without such a guarantee puts the confidentiality of legal work at risk. We also need clear internal rules: which tools, with which data, reviewed by whom.

4. We must invest in AI competence

Since February 2025, this has been a legal obligation. What is needed is the ability of the entire team to critically assess AI outputs and to know when human review cannot be replaced. Anyone who builds this competence today will be able to use more sophisticated AI systems tomorrow, including those that take on tasks autonomously. Legal departments without this foundation will not be able to deploy the next level of AI safely.
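The internal rules called for in the third takeaway (which tools, with which data, reviewed by whom) can also be written down as data rather than as prose only. The following is a minimal sketch under stated assumptions: the tool names, data classes, and roles are hypothetical placeholders, and a real policy would be defined by the legal department itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolRule:
    """One line of a hypothetical internal AI policy."""
    allowed_data: frozenset  # data classes the tool may receive
    reviewer: str            # role that must review every output
    escalate_to: str         # role that decides when the reviewer is unsure


# Hypothetical policy: names and classifications are placeholders.
POLICY = {
    "general_chatbot": ToolRule(
        allowed_data=frozenset({"public"}),
        reviewer="author",
        escalate_to="legal_ops_lead",
    ),
    "specialised_legal_ai": ToolRule(
        allowed_data=frozenset({"public", "internal", "confidential"}),
        reviewer="qualified_lawyer",
        escalate_to="general_counsel",
    ),
}


def check_use(tool: str, data_class: str) -> tuple[bool, str]:
    """Return whether a tool may be used with a data class, and why."""
    rule = POLICY.get(tool)
    if rule is None:
        return False, f"'{tool}' is not an approved tool"
    if data_class not in rule.allowed_data:
        return False, f"'{tool}' must not receive {data_class} data"
    return True, (
        f"allowed; output reviewed by {rule.reviewer}, "
        f"escalation to {rule.escalate_to}"
    )


print(check_use("general_chatbot", "confidential"))
print(check_use("specialised_legal_ai", "confidential"))
```

Keeping the policy in one structured place makes it easier to train the team on it and to show, if asked, who was responsible for reviewing a given output.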

Conclusion

Most legal departments have been through the first wave. They have tested generative AI, discussed concerns, and gathered initial experience. But generic AI that does not know your organisation is only the beginning. It is not a genuine competitive advantage. That emerges where specialised AI solutions meet proprietary legal knowledge: contract templates refined over years, clauses that have stood the test of time, internal policies that carry the organisation's history. This knowledge cannot be copied. And it is the only foundation on which Legal AI becomes genuinely better than the competition.

Investing in the AI competence of the team today means preparing for what comes next: AI systems that take on tasks autonomously. The legal departments that will deploy them with confidence are already laying the groundwork. Not in technology, but in people who understand what AI can do – and what it cannot.

Explore Related Insights

More articles related to this topic

Legal AI & Legal Tech Trends 2024

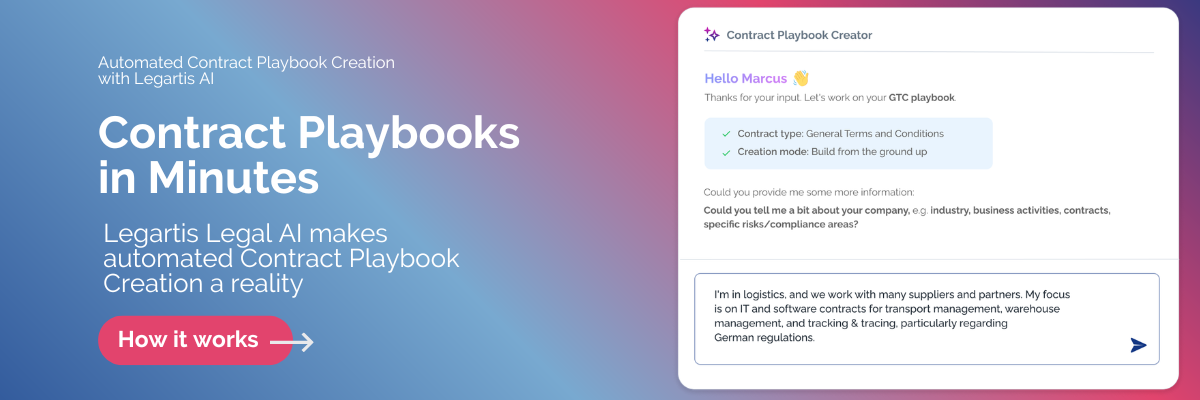

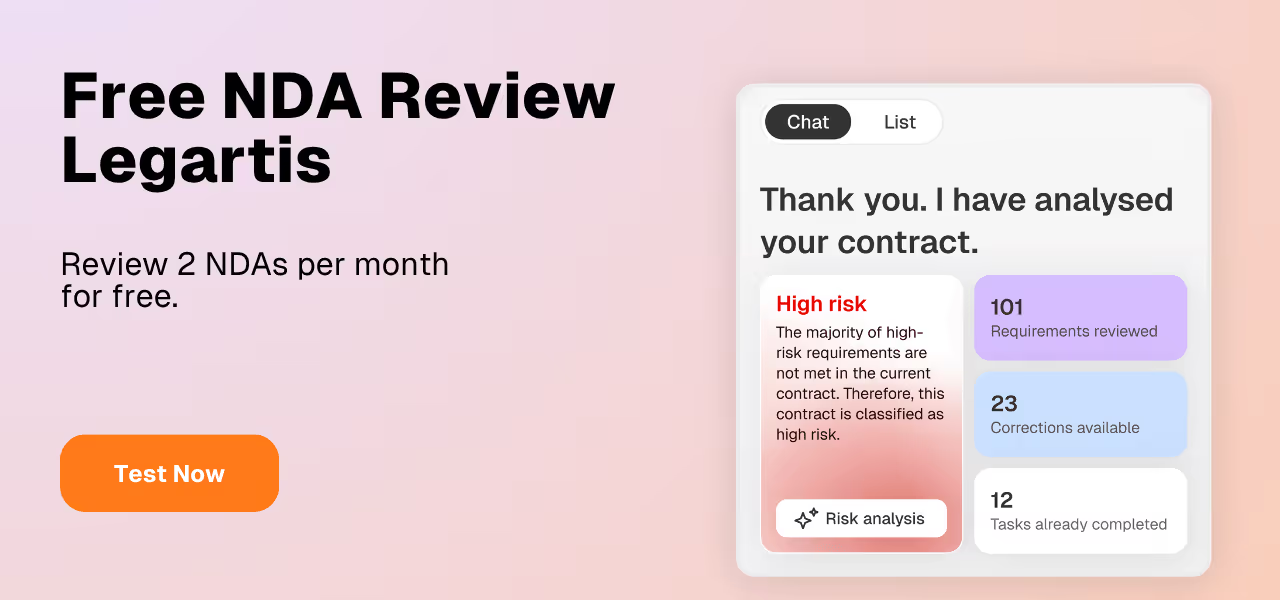

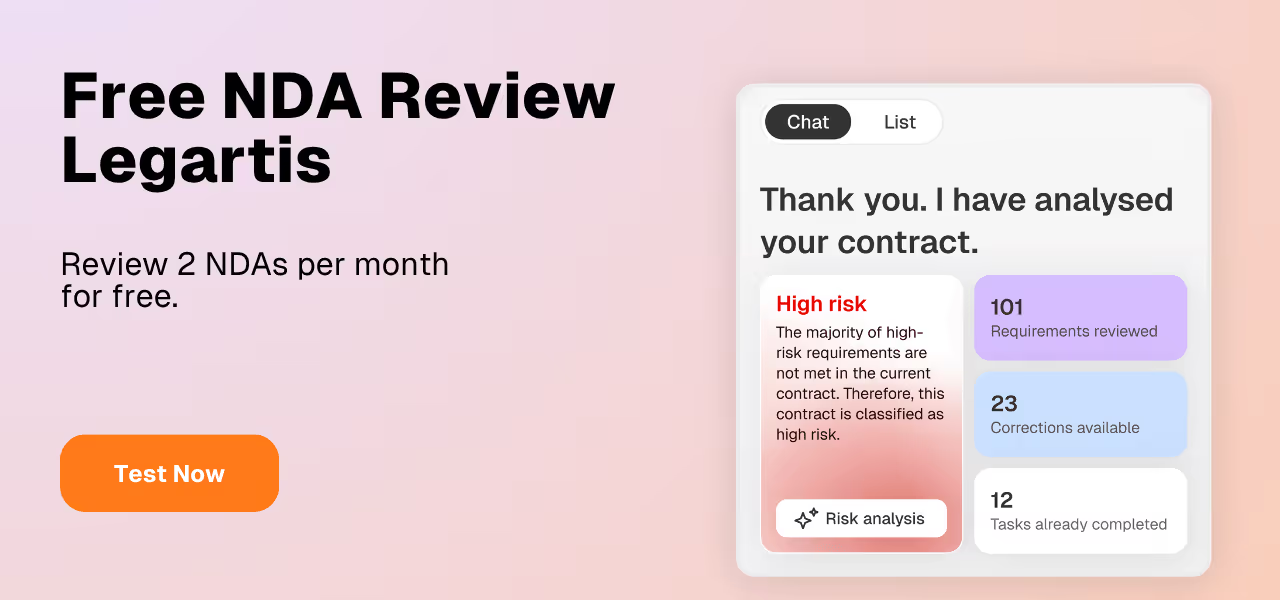

Automated Contract Review: AI is the Future

Contract Intelligence: Why Stored Knowledge No Longer Creates Competitive Advantage

Start withLegartis Today!

Talk to us about your business case or test Legartis right away!